TechOps Examples

Hey — It's Govardhana MK 👋

Welcome to another technical edition.

Every Tuesday – You’ll receive a free edition with a byte-size use case, remote job opportunities, top news, tools, and articles.

Every Thursday and Saturday – You’ll receive a special edition with a deep dive use case, remote job opportunities, and articles.

👋 👋 A big thank you to today's sponsor WARPSTREAM

Diskless, Kafka-Compatible Streaming That Runs in Your Cloud

WarpStream BYOC is a diskless, stateless Kafka-compatible streaming platform. No local disks, no inter-AZ fees, no broker rebalancing. Your data stays in your own cloud. Agents scale automatically.

Robinhood uses it for logging. Cursor runs AI telemetry on it. Grafana Labs streams at 7.5 GiB/s with zero cross-AZ fees. Change one URL, keep all your existing clients. Learn more, or sign up for free.

Get $400 in credits that never expire. No credit card required to start.

👀 Remote Jobs

New Era Tech is hiring a Sr. Azure Infrastructure Engineer

Remote Location: Worldwide

Ideaware is hiring a Lead DevOps Engineer

Remote Location: Worldwide

Powered by: Jobsurface.com

📚 Resources

Looking to promote your company, product, service, or event to 56,000+ Cloud Native Professionals? Let's work together. Advertise With Us

🧠 DEEP DIVE USE CASE

How to Manage Kubernetes for Netflix-Like, High-Traffic OTT Platforms

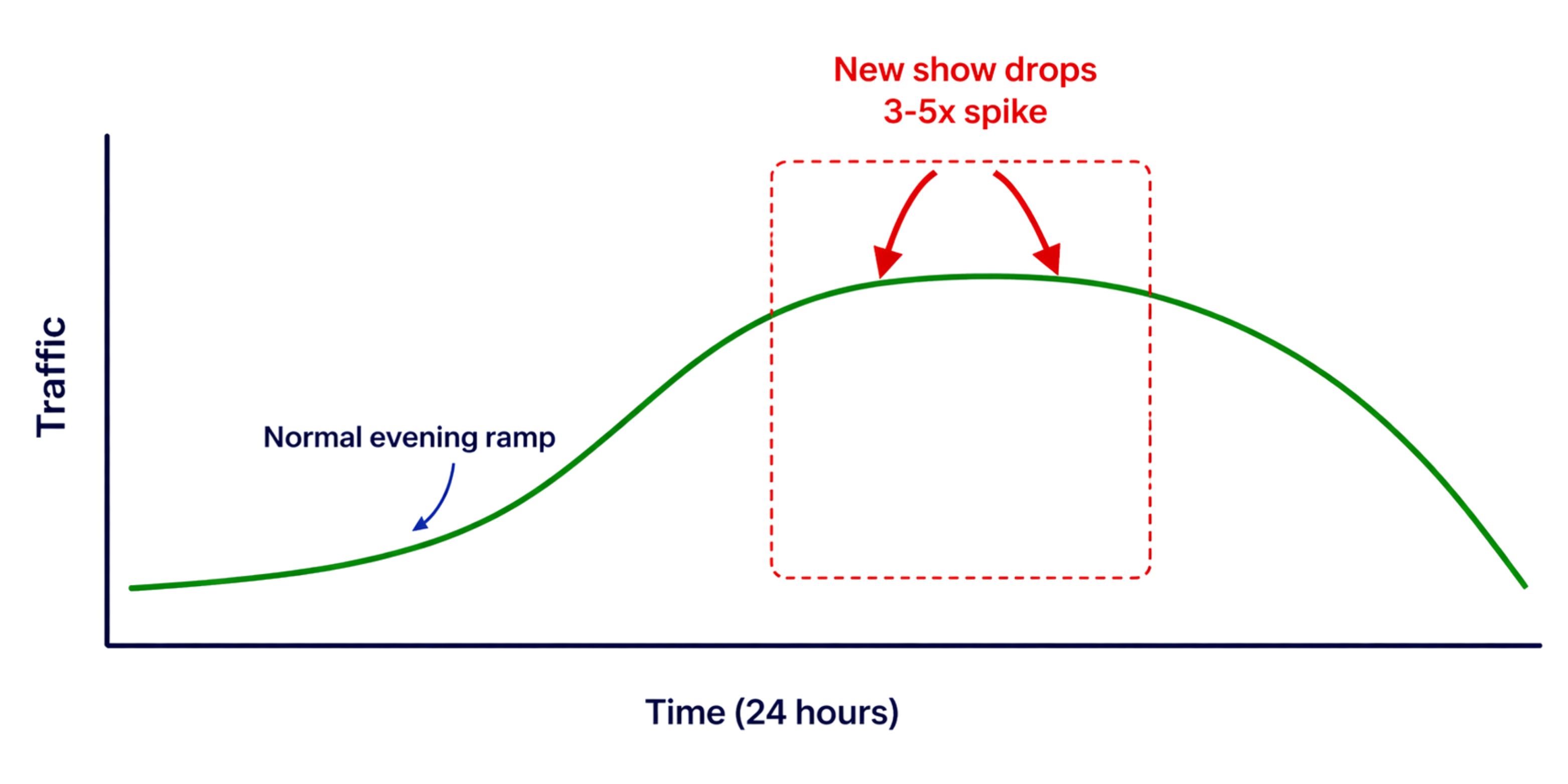

Netflix serves 325+ million subscribers across 190 countries as of early 2026. In North America alone, it drives about 15 percent of downstream internet traffic, with spikes reaching 3 to 5 times baseline within 30 minutes of major releases. That demands infrastructure that is not just large but elastic, self-healing, and able to degrade gracefully under extreme load. These architecture lessons come from years of operating at scale, where every weak assumption in distributed systems design gets exposed.

The Traffic Profile of an OTT Platform

Before designing the architecture, you need to understand what makes OTT traffic unusual compared to a standard API-driven application.

OTT traffic has four characteristics that drive every architectural decision. It is intensely bursty around content release events. It is geographically distributed across some 190 countries with different latency profiles. It mixes very different workload types: lightweight API calls for catalog browsing, stateful session management for authentication, compute-heavy video transcoding, and ML inference for recommendation engines. And it has zero tolerance for degradation, because a buffering spinner translates directly into subscriber churn.

Every Kubernetes configuration decision that follows is a response to one or more of these characteristics.

Multi Cluster Topology

The application should not run on one large cluster. Ideally, it runs as a federation of purpose-built clusters across multiple regions, each handling a specific workload class. This isolation is the critical element.

Each region runs three dedicated clusters. The API cluster handles catalog browsing, authentication, and session management. The Media cluster handles video manifest delivery, DRM licensing, and subtitle serving. The ML/GPU cluster runs recommendation inference and personalization. Each cluster spans three availability zones for redundancy.

The blast radius principle drives this separation. When a recommendation model update causes GPU memory exhaustion, it can crash the ML cluster, but the API and Media clusters are completely unaffected. Users keep streaming. The separation exists to contain failure.
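The blast radius argument can be made concrete with a small model. This is a purely illustrative sketch: the region names, the zone suffixes, and the assumption that playback depends only on the API and Media clusters (not on ML) are hypothetical choices for the example, not a description of Netflix's actual setup.

```python
from dataclasses import dataclass

# Hypothetical workload classes: one dedicated cluster each, per region.
WORKLOAD_CLASSES = ("api", "media", "ml")


@dataclass
class Cluster:
    region: str
    workload: str
    zones: list
    healthy: bool = True


def build_topology(regions):
    """Create one cluster per workload class per region, each spanning three AZs."""
    clusters = {}
    for region in regions:
        # Assumed AWS-style zone naming, e.g. us-east-1a, us-east-1b, us-east-1c.
        zones = [f"{region}{suffix}" for suffix in "abc"]
        for workload in WORKLOAD_CLASSES:
            clusters[(region, workload)] = Cluster(region, workload, list(zones))
    return clusters


def fail_cluster(clusters, region, workload):
    """Simulate a cluster-wide outage, e.g. GPU memory exhaustion in the ML cluster."""
    clusters[(region, workload)].healthy = False


def can_stream(clusters, region):
    """Assumption: playback needs API (auth, sessions) and Media (manifests, DRM).
    ML only powers recommendations, so losing it degrades the UI but not playback."""
    return clusters[(region, "api")].healthy and clusters[(region, "media")].healthy


topology = build_topology(["us-east-1", "eu-west-1"])
fail_cluster(topology, "us-east-1", "ml")
print(can_stream(topology, "us-east-1"))  # True: the ML failure stays contained
```

Had API, Media, and ML shared one cluster, the same GPU failure would be modeled as a single unhealthy cluster, and the check above would fail for every user in the region.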

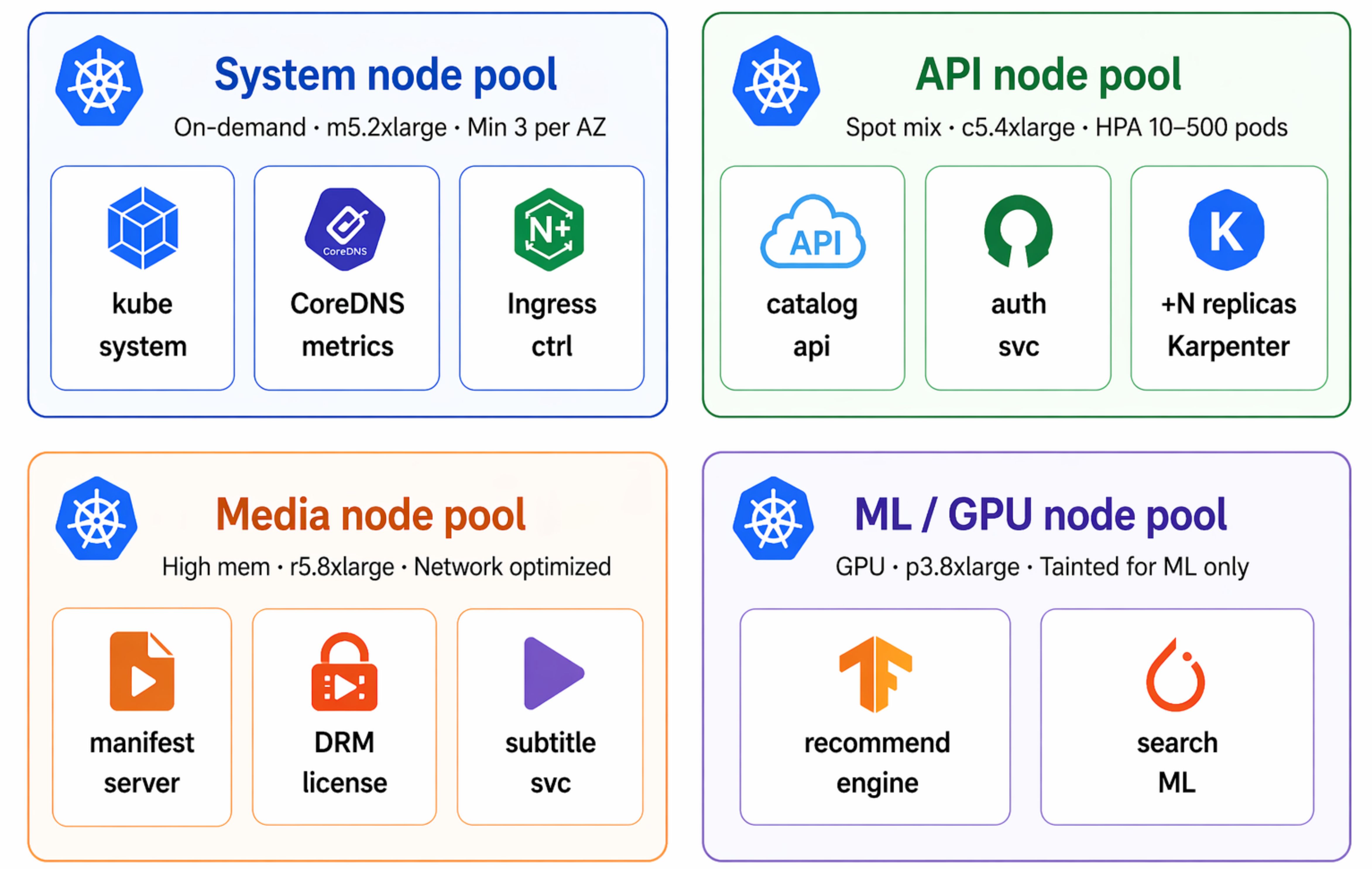

Node Pool Architecture: Matching Hardware to Workload

Within each cluster, different workloads have radically different hardware requirements. Running all workloads on the same node pool is a waste at best and a performance bottleneck at worst.

The system pool runs on on-demand instances, with at least three nodes per availability zone, and hosts Kubernetes core components, CoreDNS, ingress, and observability. These pods are shielded from preemption and deprioritized for eviction using the built-in system-cluster-critical PriorityClass.
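Here is one way the system-pool placement could look in a manifest. It is built as a Python dict and emitted as JSON, which kubectl apply accepts as readily as YAML. The add-on name, the image, and the pool: system node label are hypothetical. priorityClassName, topologySpreadConstraints, and the well-known topology.kubernetes.io/zone label are standard Kubernetes fields. The example uses kube-system, where critical add-ons conventionally run.

```python
import json


def system_addon_deployment(name, image, replicas=3):
    """Hypothetical critical add-on pinned to the system pool and spread across AZs."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "namespace": "kube-system"},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    # Built-in PriorityClass: ranks these pods above ordinary workloads.
                    "priorityClassName": "system-cluster-critical",
                    # Hypothetical label identifying on-demand system-pool nodes.
                    "nodeSelector": {"pool": "system"},
                    # Keep replicas evenly spread so one AZ loss leaves the rest running.
                    "topologySpreadConstraints": [{
                        "maxSkew": 1,
                        "topologyKey": "topology.kubernetes.io/zone",
                        "whenUnsatisfiable": "DoNotSchedule",
                        "labelSelector": {"matchLabels": labels},
                    }],
                    "containers": [{"name": name, "image": image}],
                },
            },
        },
    }


print(json.dumps(system_addon_deployment("metrics-agent",
                                         "registry.example.com/metrics-agent:1.0"), indent=2))
```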

The API pool mixes on-demand and spot instances via Karpenter. Since APIs are stateless and restart-tolerant, they run well on spot instances, which are typically 60 to 80 percent cheaper than on-demand. Karpenter selects across multiple instance types to improve availability and reduce interruptions.
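This sketch shows how the API pool might be expressed as a Karpenter NodePool, again built in Python and emitted as JSON. It assumes the AWS provider and the karpenter.sh/v1 API. The pool name, the EC2NodeClass called default, the instance categories, and the CPU limit are placeholders, so check the field names against the Karpenter version you run. When both spot and on-demand capacity are allowed, Karpenter generally prefers spot and falls back to on-demand. A broad instance-category list gives it more spot pools to draw from.

```python
import json


def api_nodepool(name="api", cpu_limit="1000"):
    """Hypothetical NodePool for stateless API pods (AWS provider, karpenter.sh/v1)."""
    return {
        "apiVersion": "karpenter.sh/v1",
        "kind": "NodePool",
        "metadata": {"name": name},
        "spec": {
            "template": {
                "metadata": {"labels": {"workload": "api"}},
                "spec": {
                    # Placeholder EC2NodeClass holding AMI, subnet, and security group settings.
                    "nodeClassRef": {
                        "group": "karpenter.k8s.aws",
                        "kind": "EC2NodeClass",
                        "name": "default",
                    },
                    "requirements": [
                        # Mix spot and on-demand capacity.
                        {"key": "karpenter.sh/capacity-type", "operator": "In",
                         "values": ["spot", "on-demand"]},
                        # Several instance families means more spot pools to choose from.
                        {"key": "karpenter.k8s.aws/instance-category", "operator": "In",
                         "values": ["c", "m", "r"]},
                        {"key": "kubernetes.io/arch", "operator": "In", "values": ["amd64"]},
                    ],
                },
            },
            # Upper bound on total CPU this pool may provision.
            "limits": {"cpu": cpu_limit},
            # Repack underused nodes once a traffic spike subsides.
            "disruption": {
                "consolidationPolicy": "WhenEmptyOrUnderutilized",
                "consolidateAfter": "1m",
            },
        },
    }


print(json.dumps(api_nodepool(), indent=2))
```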

The media pool uses high-memory, network-optimized instances for video manifests, DRM, and subtitles. Nodes are tainted with workload=media:NoSchedule to keep other workloads off them.
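The taint can be applied with kubectl taint nodes <node-name> workload=media:NoSchedule. A taint only keeps other pods off. Media pods still need a matching toleration, and if they must run only on media nodes, a node selector as well. The sketch below builds those pod-spec fields. It assumes media nodes also carry a workload=media label, which is a hypothetical convention because the taint alone adds no label. The pod name and image are placeholders.

```python
import json

# Taint as it appears under a Node's spec.taints.
MEDIA_TAINT = {"key": "workload", "value": "media", "effect": "NoSchedule"}


def media_pod(name, image):
    """Hypothetical pod that tolerates the media taint and is pinned to media nodes."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"app": name}},
        "spec": {
            # Toleration: permits scheduling onto nodes tainted workload=media:NoSchedule.
            "tolerations": [{
                "key": MEDIA_TAINT["key"],
                "operator": "Equal",
                "value": MEDIA_TAINT["value"],
                "effect": MEDIA_TAINT["effect"],
            }],
            # Node selector: keeps this pod off general-purpose nodes
            # (assumes media nodes are also labeled workload=media).
            "nodeSelector": {"workload": "media"},
            "containers": [{"name": name, "image": image}],
        },
    }


print(json.dumps(media_pod("manifest-service", "registry.example.com/manifest:1.0"), indent=2))
```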

The ML pool runs on GPU instances tainted with workload=ml:NoSchedule, so only ML workloads with a matching toleration can use these expensive resources.
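The following simplified model of the scheduler's taint check shows why only tolerating pods reach the GPU nodes. It is not the real kube-scheduler code. It handles only the Equal and Exists operators, treats NoSchedule and NoExecute as blocking, and ignores the soft PreferNoSchedule effect.

```python
# Effects that stop a pod from being scheduled onto a node (PreferNoSchedule is soft).
BLOCKING_EFFECTS = {"NoSchedule", "NoExecute"}


def tolerates(toleration, taint):
    """Simplified Kubernetes toleration matching."""
    # An empty effect on the toleration matches every effect.
    effect = toleration.get("effect", "")
    if effect and effect != taint["effect"]:
        return False
    key = toleration.get("key", "")
    if toleration.get("operator", "Equal") == "Exists":
        # Exists with an empty key tolerates every taint; otherwise keys must match.
        return key in ("", taint["key"])
    return key == taint["key"] and toleration.get("value", "") == taint.get("value", "")


def can_schedule(pod_tolerations, node_taints):
    """A pod fits only if every blocking taint on the node is tolerated."""
    return all(
        any(tolerates(t, taint) for t in pod_tolerations)
        for taint in node_taints
        if taint["effect"] in BLOCKING_EFFECTS
    )


ml_node_taints = [{"key": "workload", "value": "ml", "effect": "NoSchedule"}]
ml_toleration = [{"key": "workload", "operator": "Equal", "value": "ml", "effect": "NoSchedule"}]

print(can_schedule([], ml_node_taints))             # False: e.g. an API pod
print(can_schedule(ml_toleration, ml_node_taints))  # True: e.g. a recommender pod
```

The same toleration does not open the media pool, because the taint values differ. This is what keeps each expensive pool reserved for its own workload class.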

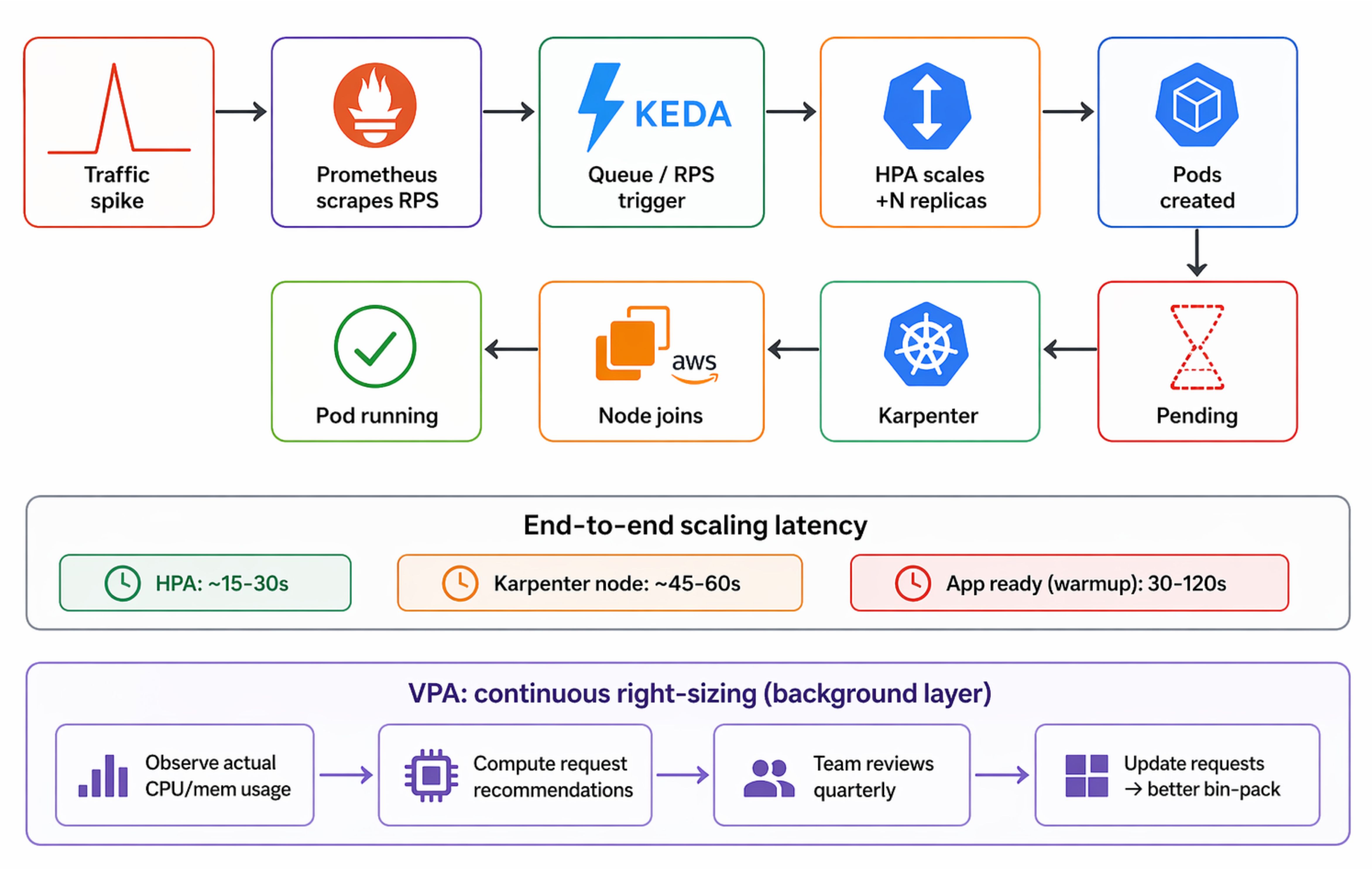

The Autoscaling Signal Chain: From Request to Pod to Node

Understanding how a user traffic spike propagates through all three layers of the autoscaling stack is essential for tuning the system correctly.
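Here is a rough sketch of the arithmetic at each hop from request to pod to node. The pod step follows the Horizontal Pod Autoscaler's documented rule, desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue), which skips scaling when the ratio is within the default 0.1 tolerance. The node step is a deliberately naive count that assumes identical pods and considers only CPU requests. Real node autoscalers such as Karpenter bin-pack across instance types, so treat that figure as an optimistic estimate. The traffic numbers and the min/max replica bounds are hypothetical.

```python
import math


def desired_replicas(current_replicas, current_value, target_value,
                     min_replicas=1, max_replicas=500, tolerance=0.1):
    """HPA-style scaling rule (simplified: one metric, no stabilization window)."""
    ratio = current_value / target_value
    if abs(ratio - 1.0) <= tolerance:
        # Within tolerance: the HPA leaves the replica count alone.
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)
    # Clamp to the HPA's configured bounds.
    return max(min_replicas, min(desired, max_replicas))


def nodes_needed(pods, pod_cpu_request_m, node_allocatable_m):
    """Naive node count: identical pods, CPU requests only, no DaemonSet overhead."""
    pods_per_node = node_allocatable_m // pod_cpu_request_m
    if pods_per_node == 0:
        raise ValueError("pod CPU request exceeds node allocatable CPU")
    return math.ceil(pods / pods_per_node)


# Hypothetical spike: 20 API pods targeted at 100 req/s each now see 400 req/s each.
pods = desired_replicas(current_replicas=20, current_value=400, target_value=100)
print(pods)                           # 80
print(nodes_needed(pods, 500, 4000))  # 10 nodes with 4000m allocatable CPU each
```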

🔴 Get my DevOps & Kubernetes ebooks! (free for Premium Club and Personal Tier newsletter subscribers)

Upgrade to Paid to read the rest.

Become a paying subscriber to get access to this post and other subscriber-only content.

Paid subscriptions get you:

- Access to archive of 250+ use cases

- Deep Dive use case editions (Thursdays and Saturdays)

- Access to Private Discord Community

- Invitations to monthly Zoom calls for use case discussions and industry leaders meetups

- Quarterly 1:1 'Ask Me Anything' power session