TechOps Examples

Hey — It's Govardhana MK 👋

Welcome to another technical edition.

Every Tuesday – You’ll receive a free edition with a byte-size use case, remote job opportunities, top news, tools, and articles.

Every Thursday and Saturday – You’ll receive a special edition with a deep dive use case, remote job opportunities, and articles.


👀 Remote Jobs

Canonical is hiring a Cloud Solutions Architect

Remote Location: Worldwide

VRCHAT is hiring a Lead DevSecOps Engineer

Remote Location: Worldwide

Powered by: Jobsurface.com


🧠 DEEP DIVE USE CASE

How to Design Microservices Architecture on AKS

When people talk about microservices on Kubernetes or AKS, many jump straight into clusters, pods and CI/CD pipelines.

But the real design actually starts one layer before that.

At the core, a microservices system simply means breaking one big application into multiple small services. Each service handles a specific business capability and runs independently.

Users usually enter the system through an Application Gateway, which routes the request to the correct service. For example:

Service A may handle users

Service B may handle orders

Service C may handle payments

Service D may handle notifications
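The gateway's routing decision can be pictured as a small lookup table. The sketch below is only an illustration: the path prefixes and service names are hypothetical, and a real Application Gateway is configured through routing rules rather than application code.

```python
from typing import Optional

# Hypothetical route table: prefixes and service names are illustrative only.
ROUTES = {
    "/users": "service-a",
    "/orders": "service-b",
    "/payments": "service-c",
    "/notifications": "service-d",
}


def resolve_service(path: str) -> Optional[str]:
    """Return the service that owns the request path, or None if no route matches."""
    for prefix, service in ROUTES.items():
        # Match the prefix itself or a sub-path beneath it,
        # so "/usersX" does not accidentally match "/users".
        if path == prefix or path.startswith(prefix + "/"):
            return service
    return None
```

For example, resolve_service("/orders/42") returns "service-b", while an unknown path returns None and the gateway would reject the request.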

Each service typically owns its own database, SQL or NoSQL depending on the need. Keeping data private to one service avoids tight coupling, because no other service can depend on its schema. With many services running and talking to each other, two more things become important:

Observability to track logs, metrics and traces

Management and orchestration to run, scale and maintain the services

This is the basic structure most microservices systems start with. That raises the next question.

How do we run all these services reliably across machines without managing servers manually?

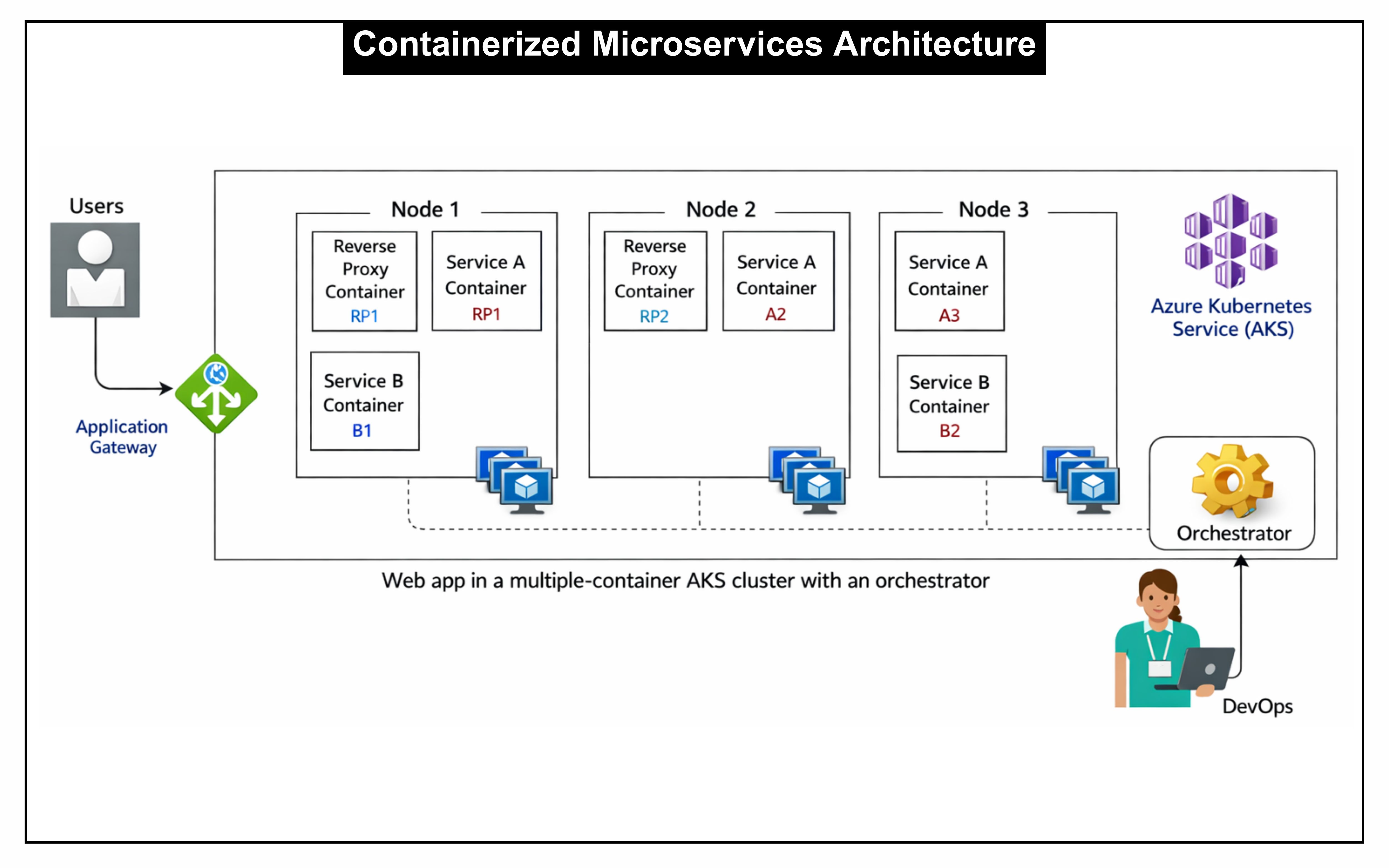

Containerized Microservices Architecture

If every service runs directly on VMs or servers, things quickly become messy. Mismatched runtime versions, dependency conflicts, and manual scaling start to pile up. That is why most modern microservices are packaged as containers.

Containers give us three important benefits:

consistent runtime environment

easy deployment across machines

simple horizontal scaling

Each microservice becomes a container image. When the system runs, multiple instances of those containers can run across different machines.

In a typical setup, several machines form a cluster. Each machine acts as a node, and containers are scheduled across these nodes.

So instead of: One service → one server

we get something more flexible:

Service A → multiple containers across nodes

Service B → multiple containers across nodes

This improves both availability and scalability.

For example:

Node 1 may run Service A and Service B containers

Node 2 may run another instance of Service A and the reverse proxy container

Node 3 may run additional Service A and Service B instances

If one node fails, the system can continue serving requests from the other nodes.
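To make the failure case concrete, here is a minimal sketch that counts how many instances of each service survive when one node goes down. The placement mirrors the three-node example above; the node and service identifiers are made up for illustration.

```python
# Placement from the example above: node -> services with a container on it.
# Identifiers are hypothetical.
PLACEMENT = {
    "node-1": ["service-a", "service-b"],
    "node-2": ["service-a", "reverse-proxy"],
    "node-3": ["service-a", "service-b"],
}


def surviving_instances(placement, failed_node):
    """Count the instances of each service left after one node fails."""
    # Start every known service at zero so a fully lost service still shows up.
    counts = {s: 0 for services in placement.values() for s in services}
    for node, services in placement.items():
        if node == failed_node:
            continue
        for service in services:
            counts[service] += 1
    return counts
```

Losing node-1 still leaves two Service A instances and one Service B instance. In this particular layout the reverse proxy runs only on node-2, so losing that node leaves zero proxy instances until one is started elsewhere.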

Another important piece here is the reverse proxy layer. This container typically handles routing traffic to the correct service instance running inside the cluster.
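One simple way a reverse proxy can spread requests across instances is round robin. The text does not say which strategy is used, so treat the sketch below as one possible approach; the class name and addresses are hypothetical, and each service is assumed to have at least one instance.

```python
import itertools


class RoundRobinProxy:
    """Pick the next instance of a service in rotating order."""

    def __init__(self, backends):
        # backends: service name -> non-empty list of instance addresses
        self._cycles = {
            name: itertools.cycle(addresses)
            for name, addresses in backends.items()
        }

    def pick(self, service):
        """Return the address that should receive the next request."""
        return next(self._cycles[service])
```

With two Service A instances registered, three consecutive calls to pick("service-a") return the first address, the second, and then the first again.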

But managing containers across many nodes manually would still be painful.

Someone has to decide:

where containers should run

when to start new ones

when to restart failed ones

how to scale services automatically

That responsibility belongs to an orchestrator. In modern cloud environments, the orchestrator is most commonly Kubernetes.
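At its heart, an orchestrator compares the state you asked for with the state that is actually running and acts on the difference. The sketch below shows that idea in a few lines. It is a simplified illustration, not Kubernetes code or its API, and the function name and action tuples are assumptions made for this example.

```python
def reconcile(desired, running):
    """Return the actions needed to move from the running state to the desired state.

    desired: service name -> number of replicas wanted
    running: service name -> number of healthy replicas right now
    """
    actions = []
    # Look at every service mentioned in either state, in a stable order.
    for service in sorted(set(desired) | set(running)):
        diff = desired.get(service, 0) - running.get(service, 0)
        if diff > 0:
            actions.append(("start", service, diff))   # too few replicas
        elif diff < 0:
            actions.append(("stop", service, -diff))   # too many replicas
    return actions
```

If Service A should have three replicas but only two are healthy, reconcile returns a single ("start", "service-a", 1) action. Running this comparison in a loop covers both restarting failed containers and scaling up or down.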

Now the architecture becomes even more structured. In the next phase we see how all of this fits together when running microservices directly on AKS with Azure services around it.

